Feb 22, 2026

Bounded Drift Architectures

Oliego

AI

Theorem

Future

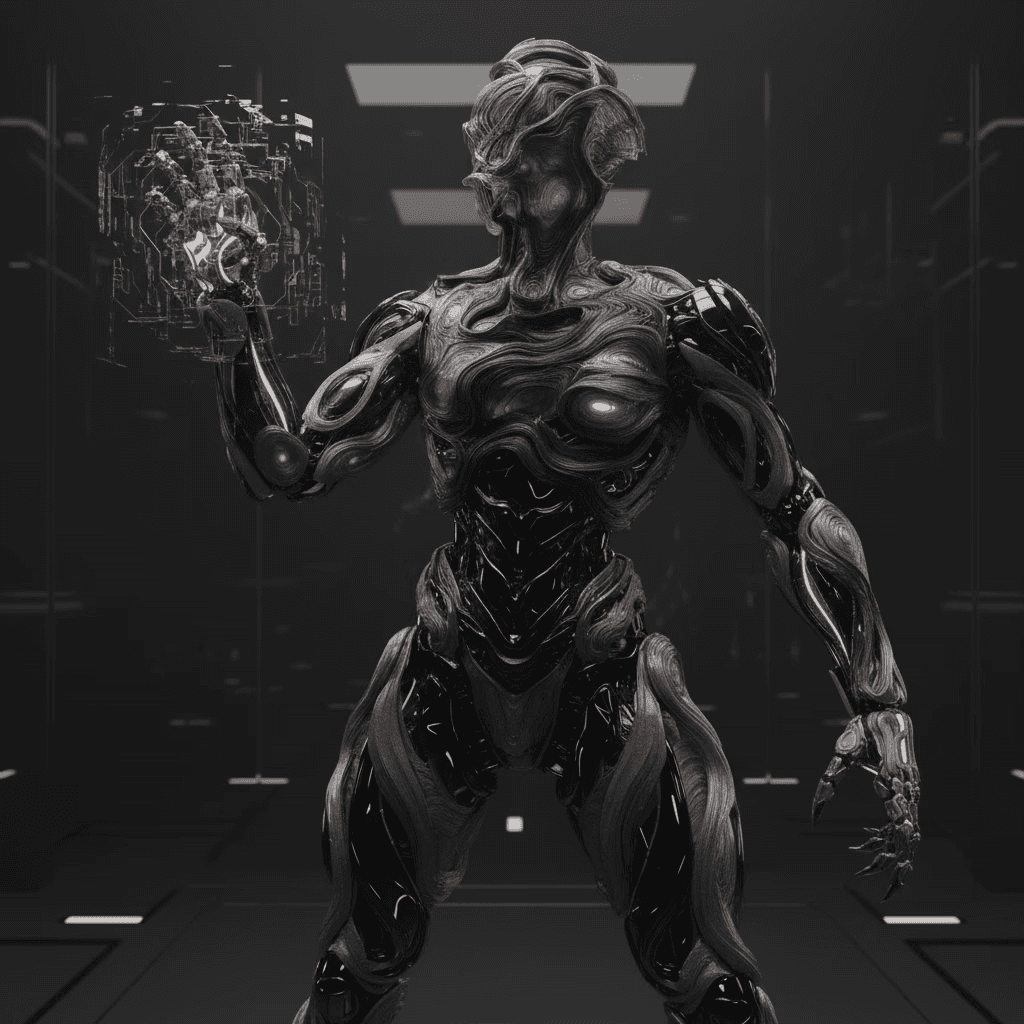

A theorem by Emmanuel Ibe II

What This Theorem Actually Says, in Plain English

The Core Idea

Most AI systems learn by chasing a target. They measure how wrong they are and keep adjusting until they get it right. Bounded Drift Architecture (BDA) works differently. Instead of chasing a fixed target, it asks a simpler question: am I still within acceptable territory? If yes, keep going. If no, snap back. Think of it like a ship with a GPS boundary rather than a destination. The crew can navigate freely as long as the ship stays inside the designated zone.
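The check-and-snap-back loop can be sketched in a few lines of Python. This is only an illustration under loud assumptions: the zone is modeled as a Euclidean ball (one simple convex set), the drift is random noise, and the names `project_to_ball` and `bounded_drift` are hypothetical, not taken from the theorem.

```python
import math
import random

def project_to_ball(x, center, radius):
    """Euclidean projection of x onto the ball {y : ||y - center|| <= radius}."""
    offset = [xi - ci for xi, ci in zip(x, center)]
    dist = math.sqrt(sum(o * o for o in offset))
    if dist <= radius:
        return list(x)  # still inside the zone: leave the state untouched
    scale = radius / dist
    return [ci + scale * o for ci, o in zip(center, offset)]

def bounded_drift(x0, center, radius, steps, step_size, seed=0):
    """Let the state drift freely, snapping back only when it leaves the zone."""
    rng = random.Random(seed)
    x = list(x0)
    snaps = 0
    for _ in range(steps):
        # Free navigation: an unconstrained random step (assumed dynamics).
        x = [xi + rng.gauss(0.0, step_size) for xi in x]
        # Boundary check: project only if the step left the zone.
        projected = project_to_ball(x, center, radius)
        if projected != x:
            snaps += 1
        x = projected
    return x, snaps

final_state, snap_count = bounded_drift(
    [0.0, 0.0], center=[0.0, 0.0], radius=1.0, steps=1000, step_size=0.2
)
```

Whatever path the random steps take, `final_state` ends inside the unit ball, and `snap_count` records how often the boundary had to intervene. No target or loss value appears anywhere in the loop.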

The Zone (Environmental Manifold)

The "zone" is a mathematically defined region in the space of all possible AI configurations. Any configuration inside the zone is considered coherent with the environment: it produces outputs that are close enough to what the real world expects. The key insight is that this zone is convex: it has no holes or dents. That geometric property is what makes the mathematics tractable and the guarantees provable.

The Main Theorem: Faster Than You'd Expect

The headline result is about speed. Naively, if you have k specialist modules and an input of length n, you'd expect to do n × k units of work, checking every specialist for every input token. The theorem proves BDA can do better: only o(k) work per input token, meaning the per-token cost grows strictly slower than k itself.

Why? Because in practice, most specialist modules score very low for any given input. The math shows that when the top-scoring specialist is sufficiently more confident than the rest, the softmax weighting concentrates. Like votes in a landslide election, only a logarithmic number of candidates ever really matter. So instead of querying all k specialists, you identify the handful of winners and evaluate only those. The rest contribute so little they can be safely ignored.
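The concentration effect is easy to see numerically. The sketch below is an illustration, not the paper's algorithm: the scores, the `sin`-based stand-in specialists, and the function names are all assumptions. It compares a dense blend over all k specialists with a blend that evaluates only the top m.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dense_mixture(scores, experts, x):
    """Baseline: evaluate all k specialists and blend by softmax weight."""
    weights = softmax(scores)
    return sum(w * f(x) for w, f in zip(weights, experts))

def sparse_mixture(scores, experts, x, m):
    """Evaluate only the m top-scoring specialists, renormalising their weights.

    Returns the blended output and the share of the full softmax mass kept.
    """
    weights = softmax(scores)
    top = sorted(range(len(scores)), key=lambda i: weights[i], reverse=True)[:m]
    kept_mass = sum(weights[i] for i in top)
    output = sum(weights[i] / kept_mass * experts[i](x) for i in top)
    return output, kept_mass

# Hypothetical setup: 1024 specialists, one of which scores far above the rest.
k = 1024
scores = [0.0] * k
scores[7] = 10.0
# Stand-in specialists with outputs bounded in [-1, 1].
experts = [lambda x, c=i: math.sin(c + x) for i in range(k)]

dense = dense_mixture(scores, experts, 0.5)
sparse, kept_mass = sparse_mixture(scores, experts, 0.5, m=1)
gap = abs(dense - sparse)
```

With a score margin of 10, the single winner holds more than 95% of the softmax mass, and for outputs bounded in [-1, 1] the gap between the two blends is at most 2 × (1 − `kept_mass`). Note that this sketch still computes all k scores to find the winners; how the winners are identified cheaply enough to reach o(k) is part of the formal result and is not reproduced here.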

Stability Guarantees

Two other guarantees are proven. First, the system is self-correcting: if it ever drifts outside the zone, a projection step pulls it back, and it does so at an exponential rate, getting closer with each step. Second, the system is environmentally robust: if the environment itself shifts slightly (the world changes a little), the zone shifts by a proportionally small amount. A well-adapted system doesn't need to relearn from scratch; it just takes a small correction step.
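Both guarantees can be illustrated on a toy zone. The sketch below assumes, purely for illustration, that the zone is a unit ball and that the correction moves a fixed fraction `alpha` of the way toward the projection at each step; the actual update rule and constants in the formal proofs may differ, and the function names are hypothetical.

```python
import math

def distance_to_ball(x, center, radius):
    """Distance from x to the ball; zero when x is already inside."""
    d = math.sqrt(sum((xi - ci) ** 2 for xi, ci in zip(x, center)))
    return max(0.0, d - radius)

def relaxed_projection_step(x, center, radius, alpha):
    """Move a fraction alpha of the way from x toward its projection on the ball."""
    offset = [xi - ci for xi, ci in zip(x, center)]
    d = math.sqrt(sum(o * o for o in offset))
    if d <= radius:
        return list(x)  # inside the zone: nothing to correct
    target = [ci + radius / d * o for ci, o in zip(center, offset)]
    return [xi + alpha * (ti - xi) for xi, ti in zip(x, target)]

center, radius, alpha = [0.0, 0.0], 1.0, 0.5

# Self-correction: start well outside the zone and record the distance per step.
x = [4.0, 3.0]
distances = []
for _ in range(10):
    distances.append(distance_to_ball(x, center, radius))
    x = relaxed_projection_step(x, center, radius, alpha)

# Robustness: shift the zone by 0.1 and measure how far a point on the
# old boundary now sits from the new zone.
shifted_gap = distance_to_ball([-1.0, 0.0], [0.1, 0.0], radius)
```

In this toy setting the recorded distances shrink by the factor (1 − `alpha`) at every step, which is the geometric decay that "exponential rate" refers to. The point on the old boundary ends up no farther from the shifted zone than the size of the shift itself, so a single small correction restores coherence.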

What This Means in Practice

Taken together, these results describe an AI architecture that is fast, stable, and adaptive by design rather than by luck. Traditional systems optimize until something breaks; BDA stays inside bounds that guarantee coherence. It scales modularly: adding more specialist modules doesn't multiply cost linearly. And it handles shifting environments without catastrophic forgetting.

The analogy is a healthy organism rather than an optimization machine: not trying to be perfect, just staying coherent.

For the formal proofs, see: Bounded Drift Architectures - A Homeostatic Framework for Scale-Invariant Neural Systems, by Emmanuel Ibe II | Oliego Inc.

For access to the complete theorem and proofs, contact us directly at hello@oliego.com.